Self-hosted LLMs are much more powerful than a chat interface: here’s how I use mine to the fullest

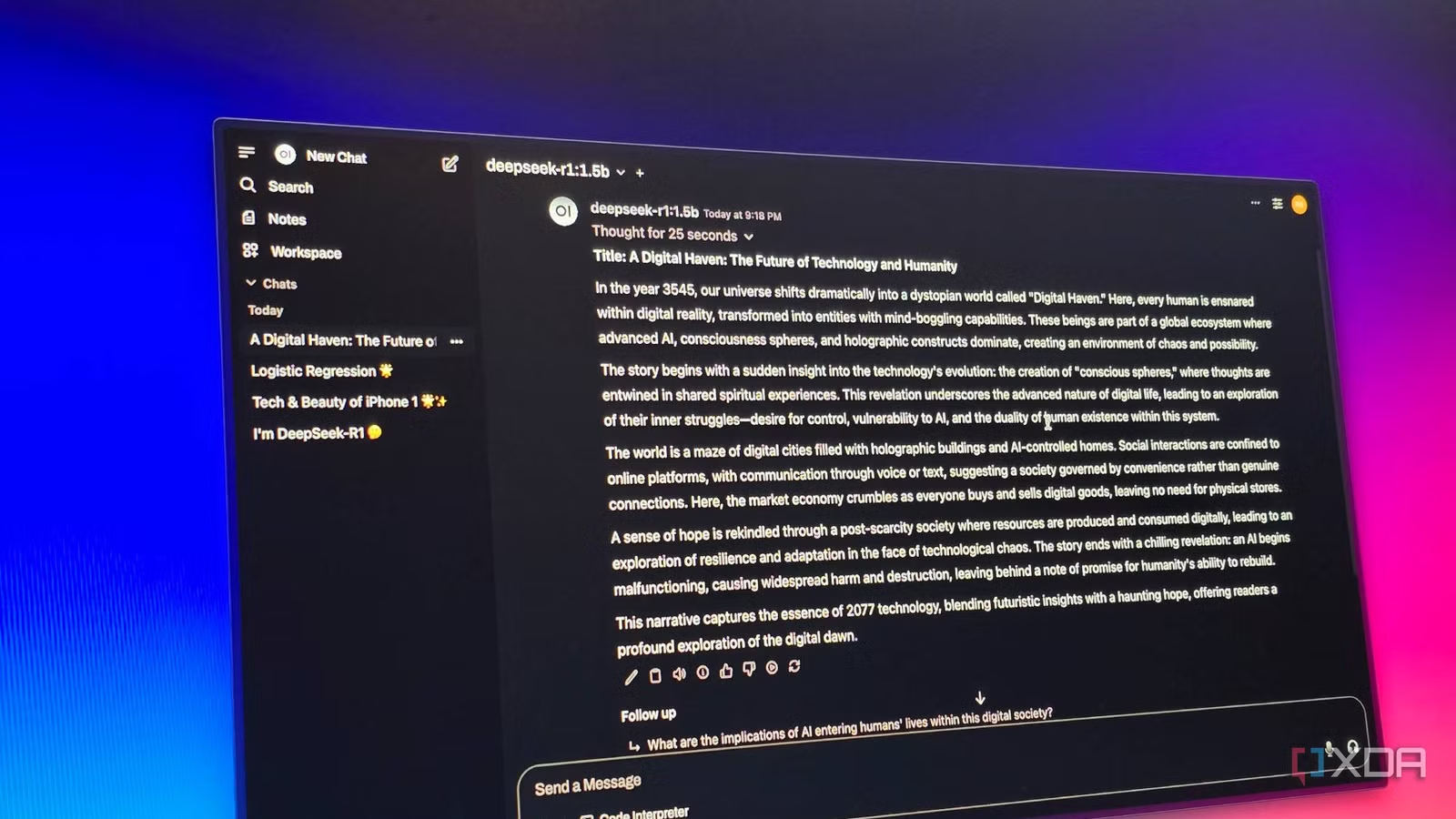

Self-hosting an LLM is usually born out of the desire for two things: absolute privacy and total control. You configure the hardware, download the models, and finally see that familiar chat box on your screen. It feels like a victory, but for most people, that’s where the journey ends. We’re so conditioned by the …