As a home labber, I’ve always advocated running services on my local hardware instead of relying on cloud platforms. And my stance on FOSS tools didn’t change even after I started running large language models. If anything, I’ve grown to love the 9B and 12B LLMs I’ve deployed on my server nodes, because I don’t have to worry about external companies accessing the documents and log files I upload to my models.

But no matter how much I love my local models, they can’t hold up against Claude, Perplexity, ChatGPT, and other cloud-based models running on hundreds of billions of parameters, especially for demanding coding tasks that require huge context windows. Or at least that’s what I thought until I started using Qwen3.6-35B-A3B on my gaming PC. And let me tell you, this powerhouse of an LLM can compete with expensive cloud models on development workloads while still running on my weak hardware.

Getting Qwen3.6-35B-A3B to work on my outdated GPU was a bit of a challenge

But with some tweaking, I got this beast of a model to generate over 20 tokens/s.

Before I talk about my experience with Qwen3.6, let me go over the hardware side of my LLM hosting setup. As a broke labber, the RTX 3080 Ti is the fastest GPU in my arsenal, and it held up well enough to run 12B models, and even GPT-OSS-20B with tweaked settings. However, it only has 12GB of VRAM and, to be honest, it’s woefully outdated for LLM-powered tasks in 2026. On paper, it would be difficult to run a 27B model on this card, let alone a 35B LLM. But it turns out that not only is it possible to load Qwen3.6-35B-A3B on my old system, but it can even run this LLM at a respectable token generation rate of 24 t/s.
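To put the VRAM problem in rough numbers, here’s a back-of-the-envelope sketch. It assumes Q4_K_M averages about 4.85 bits per weight (an approximation; real GGUF files vary because some tensors stay at higher precision) and reads “A3B” as roughly 3B parameters activated per token. Treat the output as a ballpark, not a measurement.

```python
# Back-of-the-envelope weight size for a quantized model.
# ASSUMPTION: Q4_K_M averages ~4.85 bits per weight; real GGUF files vary.
BITS_PER_WEIGHT = 4.85
VRAM_GIB = 12.0  # RTX 3080 Ti


def weights_gib(params_billion: float, bits_per_weight: float = BITS_PER_WEIGHT) -> float:
    """Approximate size of the weights in GiB."""
    return params_billion * 1e9 * bits_per_weight / 8 / 2**30


total = weights_gib(35.0)   # every expert has to live somewhere (VRAM or system RAM)
active = weights_gib(3.0)   # "A3B": roughly 3B parameters are used per generated token
print(f"35B total: ~{total:.1f} GiB, fits in VRAM: {total < VRAM_GIB}")
print(f"3B active: ~{active:.1f} GiB read per generated token")
```

By this estimate, the full weights land somewhere around 20GiB, well past 12GB, while the per-token working set is under 2GiB. That gap is what makes parking most of the experts in system RAM a workable compromise.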

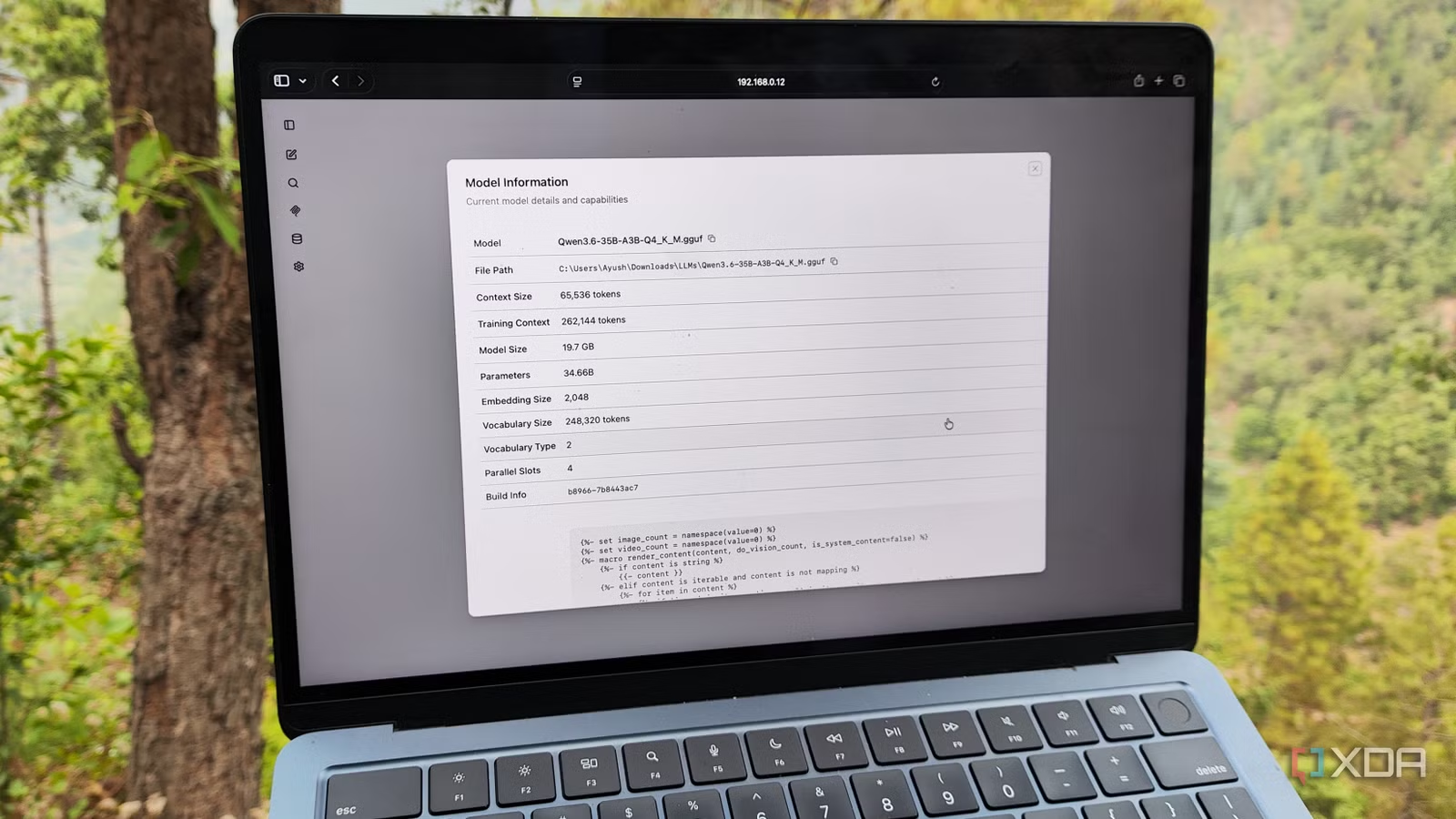

Since this is a Mixture-of-Experts (MoE) model, I can use the --n-cpu-moe flag to keep some of the expert weights on the CPU instead of forcing them onto my graphics card, while -ngl 999 ensures my GPU handles the KV cache and attention layers. Raising the thread count with -t gives the CPU more compute to work with, and since I wanted enough context for my coding tasks, I set -c to 65536. After asking our resident LLM maestro, Adam Conway, for some advice, I used the following command to get my llama-server instance running with Qwen3.6:

llama-server.exe -m "C:\Users\Ayush\.lmstudio\models\lmstudio-community\Qwen3.6-35B-A3B-GGUF\Qwen3.6-35B-A3B-Q4_K_M.gguf" -c 65536 -ngl 999 --n-cpu-moe 30 -fa on -t 20 -b 2048 -ub 2048 --no-mmap --jinja
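Here’s a rough model of how -ngl 999 and --n-cpu-moe divide the weights, following llama.cpp’s documented behavior for --n-cpu-moe N (keep the MoE weights of the first N layers on the CPU). The layer count and per-layer sizes are hypothetical placeholders, not values read from the actual GGUF, so only the shape of the split matters here.

```python
# Sketch of how -ngl 999 and --n-cpu-moe N split a MoE model's weights.
# HYPOTHETICAL numbers: not read from the real Qwen3.6-35B-A3B GGUF.
N_LAYERS = 40
EXPERT_GIB_PER_LAYER = 0.45  # routed-expert FFN weights in one layer
DENSE_GIB_PER_LAYER = 0.04   # attention and other non-expert weights in one layer


def placement(n_gpu_layers: int, n_cpu_moe: int) -> dict:
    """Return GiB of weights on GPU vs CPU for the given -ngl / --n-cpu-moe values."""
    gpu = cpu = 0.0
    for layer in range(N_LAYERS):
        layer_on_gpu = layer < n_gpu_layers  # -ngl 999 exceeds N_LAYERS, so every layer qualifies
        experts_on_cpu = layer < n_cpu_moe   # the first N layers keep their expert weights on the CPU
        if layer_on_gpu:
            gpu += DENSE_GIB_PER_LAYER
        else:
            cpu += DENSE_GIB_PER_LAYER
        if layer_on_gpu and not experts_on_cpu:
            gpu += EXPERT_GIB_PER_LAYER
        else:
            cpu += EXPERT_GIB_PER_LAYER
    return {"gpu_gib": round(gpu, 2), "cpu_gib": round(cpu, 2)}


print(placement(999, 30))
print(placement(999, 32))
```

With these made-up numbers, placement(999, 30) leaves about 6.1GiB of weights on the GPU and 13.5GiB in system RAM. Raising N, as with the 32 used for llama-bench, shifts more expert weight into RAM and frees VRAM for context and batch buffers.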

Just to test things out, I logged into the web UI served by llama-server and ran a quick query about XDA Developers. To my surprise, the LLM hit over 20 tokens/s, so I switched to llama-bench to see how far I could push it. The most my system could handle was a 16K-token prompt with -ctk set to q8_0 quantization (although also setting -ctv to q8_0 would crash it).

llama-bench.exe -m "C:\Users\Ayush\Downloads\LLMs\Qwen3.6-35B-A3B-Q4_K_M.gguf" -p 16000 -n 256 -ngl 999 --n-cpu-moe 32 -fa on -t 20 -b 2096 -ub 2096 -ctk q8_0
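Why does quantizing the K cache help? KV-cache memory grows linearly with context length, and ggml’s q8_0 format stores each block of 32 values in 34 bytes (32 int8 values plus an fp16 scale), roughly half the size of f16. The sketch below uses hypothetical attention dimensions rather than the model’s real metadata, so only the ratios are meaningful.

```python
# KV-cache size estimate showing the effect of -ctk q8_0.
# HYPOTHETICAL dimensions: placeholders, not Qwen3.6-35B-A3B's real metadata.
N_ATTN_LAYERS = 10
N_KV_HEADS = 2
HEAD_DIM = 256

BYTES_PER_ELEM = {
    "f16": 2.0,
    "q8_0": 34 / 32,  # ggml q8_0 block: 32 int8 values + one fp16 scale = 34 bytes
}


def kv_cache_mib(n_ctx: int, type_k: str = "f16", type_v: str = "f16") -> float:
    """K and V each hold N_ATTN_LAYERS * N_KV_HEADS * HEAD_DIM values per token."""
    elems = N_ATTN_LAYERS * N_KV_HEADS * HEAD_DIM * n_ctx
    total_bytes = elems * BYTES_PER_ELEM[type_k] + elems * BYTES_PER_ELEM[type_v]
    return total_bytes / 2**20


for ctx in (16000, 65536):
    print(ctx, kv_cache_mib(ctx), kv_cache_mib(ctx, type_k="q8_0"))
```

With those placeholder dimensions, a 65,536-token cache drops from 1,280MiB with f16 K and V to 980MiB with -ctk q8_0 alone; quantizing V as well would shrink it further, which is the setting that crashed in my testing.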

In fact, a look at system resources confirmed that my PC’s 32GB of RAM was the bottleneck with these commands. So if I could get my hands on two more 16GB sticks (which might be plausible once the RAM apocalypse is over), I could push the context and quantization settings even further. But since I wanted to test whether Qwen3.6 could replace its cloud counterparts for my specific tasks, it was time to pair it with my usual code editors, agentic tools, and FOSS applications.

Qwen3.6-35B-A3B is a beast of an LLM

It’s a foolproof coding companion

Aside from my productivity tasks (which I’ll get to in a moment), I use LLMs extensively as coding companions. And no, I’m not talking about vibe coding either. Rather than letting clankers build entire applications, I use them to troubleshoot issues, rearrange syntax, and autocomplete my code. Until now, I alternated between Qwen2.5-Coder and DeepSeek R1 for my coding tasks, and while they were good companions, I often had to rerun prompts and spell out the context in great detail before they started offering useful suggestions.

Qwen3.6, on the other hand, gives solid troubleshooting advice from the very first prompt, to the point where it correctly identified why my Home Assistant setup was malfunctioning after I paired it with Claude Code and fed it the trigger YAML file and HASS logs. Hell, I even ran a few other terminal logs through it, and aside from two configs (which, in all honesty, didn’t contain enough error details), it accurately identified the faulty packages and configs. Likewise, I’ve paired it with the Continue.dev extension in VS Code, and it’s great for autocompleting my variable names and adapting syntax to different languages.
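When feeding long logs to a local model, trimming them to the relevant lines first keeps the prompt comfortably inside the 65,536-token context. The helper below is a hypothetical sketch, not part of Claude Code or Home Assistant: it keeps WARNING, ERROR, and CRITICAL lines plus a configurable number of neighboring lines, and the sample text is made up for illustration.

```python
import re

# HYPOTHETICAL helper: keep warning/error lines (plus neighbors) from a log
# so the excerpt sent to the model stays small.
LEVEL_RE = re.compile(r"\b(WARNING|ERROR|CRITICAL)\b")


def extract_problem_lines(log_text: str, context: int = 1) -> list:
    """Return matching lines plus `context` lines before and after each match, in order."""
    lines = log_text.splitlines()
    keep = set()
    for i, line in enumerate(lines):
        if LEVEL_RE.search(line):
            keep.update(range(max(0, i - context), min(len(lines), i + context + 1)))
    return [lines[i] for i in sorted(keep)]


# Made-up sample text, not a real Home Assistant log.
sample = "\n".join([
    "INFO service started",
    "INFO loading config",
    "ERROR trigger failed to parse",
    "INFO retrying",
    "INFO idle",
])
print("\n".join(extract_problem_lines(sample)))
```

A smaller excerpt also means less prompt processing, which matters when part of the model is running on the CPU.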

I also bundled it with a handful of self-hosted FOSS tools

If you’ve read my articles on XDA, you probably know that I use LLMs with a number of LXCs and Docker containers. Well, Qwen3.6 is just as effective for my productivity-focused toolbox. Thanks to llama-server’s OpenAI-compatible API, I can pair this LLM with everything from my Paperless-ngx companion apps to good old Open WebUI. I also paired it with OpenNotebook, and it’s significantly better than any of the other local models I’ve used for putting my research notes together. Likewise, I added it to Blinko, where it goes through my notes and answers my queries.
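Because llama-server exposes an OpenAI-compatible /v1/chat/completions endpoint, any tool that accepts a custom base URL can talk to it. Here’s a minimal, standard-library-only client sketch, assuming llama-server is running on its default address of 127.0.0.1:8080; the model name is a placeholder, since a single-model llama-server instance answers with whatever model it was launched with.

```python
import json
import urllib.request

# ASSUMPTION: llama-server is listening on its default host and port.
BASE_URL = "http://127.0.0.1:8080/v1"


def build_chat_request(prompt: str, system: str = "You are a helpful assistant.") -> dict:
    """Build an OpenAI-style chat completion payload."""
    return {
        "model": "qwen3.6-35b-a3b",  # hypothetical label; a single-model server doesn't need it
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": prompt},
        ],
    }


def chat(prompt: str) -> str:
    """POST the prompt to the local server and return the assistant's reply text."""
    payload = json.dumps(build_chat_request(prompt)).encode("utf-8")
    req = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=300) as resp:  # long timeout for big prompts
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, chat("Summarize this note: ...") returns the model’s reply as a string. Apps such as Open WebUI only need that same base URL in their OpenAI connection settings.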

The best part? Not only can I keep my notes safe from the prying eyes of cloud-based AI models, but I also don’t have to spend any extra money on subscription fees. For example, I feed error logs to Claude Code every time my home lab goes down, and without Qwen3.6-35B-A3B, I’d have to pay for API usage. And since this 35B giant is pretty good at search tasks, I can just pair it with Open WebUI + SearXNG instead of relying on ChatGPT.