AI tools have become much smarter over the past few years. They understand prompts better now, so you don’t always have to be extremely descriptive. That said, more detailed prompts usually lead to better results. The problem is that being descriptive takes effort: you need to think more, write more, and refine what you’re asking to get the right result. If the task is repetitive, like summarizing YouTube videos in a specific format, typing the same detailed prompt every time becomes tedious.

One solution is to write the prompt once, save it, and reuse it. You can create your own prompt library and use it for repetitive tasks. That’s what I did for a long time until I discovered Fabric. Fabric is an AI prompt framework that offers a collection of reusable prompts, accessible through the terminal. Instead of writing prompts from scratch every time, it gives you structured instructions for specific tasks. It also works with multiple models, so all you need to do is connect an API key and you’re good to go.

I’ve been using it for a few months now, and it has stayed reliable across different models. I really wish I had found this tool sooner. It already existed back then, and it would have made things much easier.

Fabric is the moderator between you and the AI

This ensures that you get consistent answers

Fabric is an open source AI framework created by Daniel Miessler. It is designed around the idea that AI does not have a capacity problem, but an integration problem, meaning that even though the models are powerful, it remains difficult to apply them consistently in everyday workflows. Fabric solves this problem by organizing prompts into reusable components and making them accessible through a unified system.

At its core, Fabric is a modular framework built around a collection of structured prompts called “Patterns”. These patterns are essentially pre-made prompts designed to solve specific real-world tasks. You can use them to summarize content, extract information, generate text, or transform information into different formats.

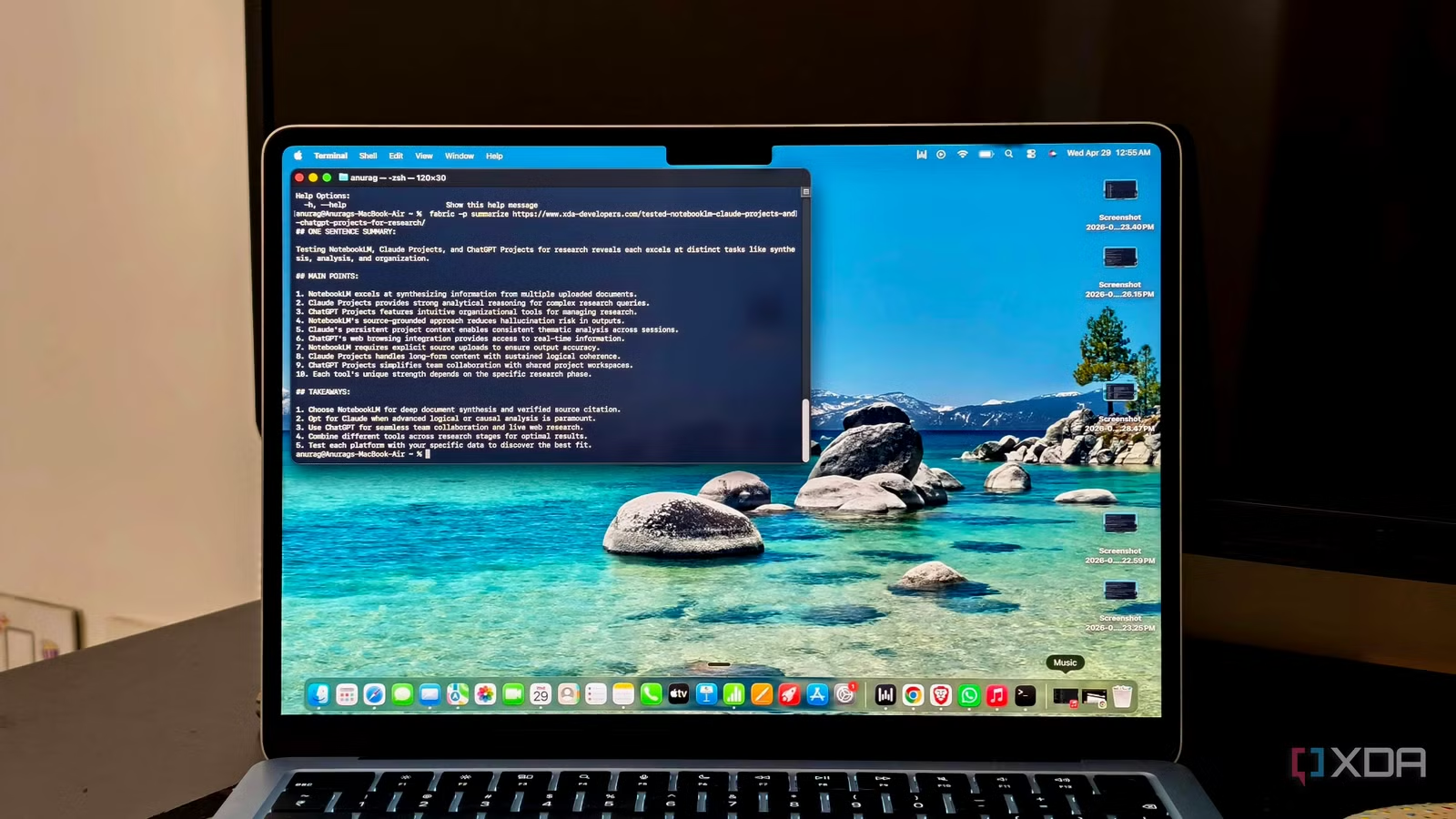

Fabric is primarily accessed through a command-line interface, although additional interfaces and support tools can be layered on top of it. The CLI lets you run patterns, manage configurations, and work with different AI models. It supports multiple providers, including ChatGPT, Claude, Gemini, and even ones many of us have left behind, like Copilot.

Setting up Fabric involves downloading a prebuilt binary or installing it through a package manager such as Homebrew.

curl -fsSL https://raw.githubusercontent.com/danielmiessler/fabric/main/scripts/installer/install.sh | bash

Once installed, the tool can be run directly from the terminal. You then configure API keys or connect local models, after which you can start running patterns on your input data. Fabric supports a wide range of use cases, including summarizing articles or videos, extracting key ideas from content, generating written drafts, analyzing information, and converting raw inputs into structured outputs. Since patterns are reusable and can be chained together, the framework can also support more complex workflows in which multiple steps run in sequence.
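Chaining can be as simple as piping one pattern’s output into the next, the usual Unix way. The sketch below wraps that idea in a small shell function. It assumes `fabric` is installed, configured, and on your PATH; `fabric_chain` is a hypothetical helper name, not something that ships with Fabric.

```shell
# Hypothetical helper (not part of Fabric): run stdin through several
# patterns in order, feeding each pattern's output into the next one.
# Assumes `fabric` is on PATH and every pattern name passed in exists.
fabric_chain() {
  if [ "$#" -eq 0 ]; then
    # No patterns left: pass the input through unchanged.
    cat
    return
  fi
  first="$1"
  shift
  # Run the first pattern, then recurse on the remaining pattern names.
  fabric -p "$first" | fabric_chain "$@"
}
```

Usage would look like `cat article.txt | fabric_chain extract_wisdom summarize`, assuming both patterns are present in your install. Each step only sees the previous step’s output, so the order of pattern names matters.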

Using Fabric is super simple

You can also easily connect it to your automation systems

As mentioned above, Fabric works directly from the command line: you pass input to a selected pattern, which defines how the AI should process that input. In practice, this usually means piping text from a file or URL into Fabric and choosing a pattern with the -p flag.

For example, a locally saved article can be summarized by running a command such as cat article.txt | fabric -p summarize, while a web link can be passed directly for processing using fabric -p summarize.

This came in really handy recently when I had to interview about 30 people for a client. This was part of a consumer survey and I asked a fixed set of questions. But when you talk to someone in person, the conversation tends to go in different directions. I had transcripts, but when I ran them directly through the AI, I got slightly different answers each time. However, once I started using Fabric to summarize them, the results were much more consistent.
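For a batch like those interview transcripts, a short loop makes sure every file goes through exactly the same pattern. This is a minimal sketch, assuming the transcripts are plain `.txt` files in one folder and `fabric` is on your PATH; the function name `summarize_dir` and the folder names are hypothetical.

```shell
# Hypothetical helper: summarize every .txt file in a folder with one
# pattern, writing one Markdown file per input into an output folder.
# Usage: summarize_dir <input_dir> <output_dir> [pattern]
summarize_dir() {
  in_dir="$1"
  out_dir="$2"
  pattern="${3:-summarize}"   # default pattern if none is given
  mkdir -p "$out_dir"
  for f in "$in_dir"/*.txt; do
    # If there are no .txt files, the glob stays literal; skip it.
    [ -e "$f" ] || continue
    name=$(basename "$f" .txt)
    fabric -p "$pattern" < "$f" > "$out_dir/$name.md"
  done
}
```

Running `summarize_dir transcripts summaries` would leave one summary per interview in `summaries/`, all shaped by the same pattern instructions rather than by whatever ad hoc prompt was typed that day.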

Fabric also fits naturally into automation workflows because its command-line interface makes it easy to connect to scripts and orchestration tools. For example, I wanted to create a daily brief using n8n that brought together articles, newsletters, tweets, and YouTube videos, all summarized into a consistent format each day. I built this workflow using Fabric with n8n, where Fabric handled the processing.

It acts as a processing layer within a larger pipeline. An automation can be triggered when a new article is saved, a YouTube link is added, or a meeting transcript is generated. It then retrieves the content, passes it through a Fabric pattern such as a summary or information extraction, and stores the result in a destination like Notion, Google Docs, or a database.
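The Fabric step in such a pipeline can be a small shell function that the automation tool calls, for example through n8n’s Execute Command node on a self-hosted instance. This sketch assumes the earlier steps have already saved each item (article, newsletter, transcript) as a `.txt` file in an inbox folder. It writes a single dated Markdown brief locally, and pushing that file on to Notion or Google Docs is left to later steps. `build_brief` and the folder layout are hypothetical.

```shell
# Hypothetical helper: turn a folder of saved items into one daily brief.
# Each .txt file becomes its own section, processed by the same pattern,
# so every source ends up in a consistent format.
# Usage: build_brief <inbox_dir> <brief_file> [pattern]
build_brief() {
  inbox="$1"
  brief="$2"
  pattern="${3:-summarize}"
  # Start a fresh brief headed with today's date.
  printf '# Daily brief %s\n' "$(date +%Y-%m-%d)" > "$brief"
  for item in "$inbox"/*.txt; do
    [ -e "$item" ] || continue   # empty inbox: keep just the header
    printf '\n## %s\n\n' "$(basename "$item" .txt)" >> "$brief"
    fabric -p "$pattern" < "$item" >> "$brief"
  done
}
```

Keeping Fabric behind one function like this means the automation only has to pass two paths, and the pattern can change without touching the workflow itself.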
