I’ve been using Claude Pro long enough that I don’t really think about how much I get from the subscription anymore. The five-hour usage reset on the free tier was getting old, so I upgraded, and that was more or less the end of the internal debate. What I didn’t have a clear answer to at first was what exactly I was getting beyond the longer sessions. I don’t mean that in the feature-list sense; I know what the features are. I mean which of them I would actually miss if they disappeared, and which parts of my workflow they really make possible.

I’ve been running Qwen 3.5 9B locally for a while now, and it’s no longer a secondary experience; at this point it’s an everyday tool. So for a week, I swapped it in for Claude and used it exactly the way I normally use Claude: same workflow, different model. Most of it came closer than I expected, until one thing very much didn’t.

What I actually use Claude for

Or at least, what I thought I used it for

I had a pretty confident answer to this question: the document uploads, the image analysis, the long research sessions, Projects for keeping context loaded across chats, and the interactive visuals released last month. I use all of it daily, and I would have told you I knew exactly which parts I would miss if they disappeared.

That turned out to be wrong. Not completely, but enough to matter. When you actually swap out the tool and run your real workflow through something else, what you thought was carrying the weight isn’t always what actually is. Part of why I reached for Claude was habit more than necessity. Qwen covered more ground than I expected, or at least enough that the gap didn’t really matter in practice. What I’m actually paying for is more specific than any single Claude feature.

My local configuration

The quick summary

If you’ve read anything else I’ve written about local LLMs, you already know my setup, so I’ll keep this brief: Qwen 3.5 9B, running in LM Studio on an RTX 3070 with 8GB of VRAM, with a 60,000-token context window. It can manage that on modest hardware because of its hybrid architecture built around GDN (Gated DeltaNet) layers, which keep a fixed-size state. As a result, memory use stays far flatter as the context grows, instead of climbing with every token the way a standard transformer’s does. That’s why I switched to it from gpt-oss 20B a while ago.
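To see why that matters on an 8GB card, here is a rough back-of-the-envelope sketch in Python. Every shape in it is a hypothetical placeholder, not Qwen 3.5 9B’s published configuration: a 32-layer model with 8 key/value heads of dimension 128 and an fp16 cache, plus a hybrid variant in which one layer out of every four uses full attention while the rest are GDN-style linear-attention layers holding a fixed state (assumed here to be 16 heads of 128 by 128 values). It only illustrates how each design grows with context; real VRAM use also includes the weights and activations.

```python
# Rough KV-cache arithmetic: standard transformer vs. hybrid (linear + full attention).
# All shapes below are HYPOTHETICAL placeholders, not Qwen 3.5 9B's real config.

NUM_LAYERS = 32        # total layers (assumption)
KV_HEADS = 8           # key/value heads per full-attention layer (assumption)
HEAD_DIM = 128         # dimension per head (assumption)
BYTES_PER_VALUE = 2    # fp16/bf16 storage
ATTENTION_EVERY = 4    # hybrid: one full-attention layer out of every four (assumption)
LINEAR_HEADS = 16      # heads per linear-attention layer (assumption)

# Each linear-attention layer keeps one HEAD_DIM x HEAD_DIM state matrix per head.
# Its size does not depend on how many tokens have been processed.
STATE_BYTES_PER_LINEAR_LAYER = LINEAR_HEADS * HEAD_DIM * HEAD_DIM * BYTES_PER_VALUE


def kv_cache_bytes(tokens: int, attention_layers: int) -> int:
    """Keys plus values (hence the 2) for every token in every full-attention layer."""
    return 2 * attention_layers * KV_HEADS * HEAD_DIM * BYTES_PER_VALUE * tokens


def standard_transformer_bytes(tokens: int) -> int:
    """Every layer is full attention, so the whole cache grows with each token."""
    return kv_cache_bytes(tokens, NUM_LAYERS)


def hybrid_bytes(tokens: int) -> int:
    """Only the full-attention layers grow; the linear layers add a constant state."""
    attention_layers = NUM_LAYERS // ATTENTION_EVERY
    linear_layers = NUM_LAYERS - attention_layers
    growing = kv_cache_bytes(tokens, attention_layers)
    fixed = linear_layers * STATE_BYTES_PER_LINEAR_LAYER
    return growing + fixed


if __name__ == "__main__":
    for tokens in (4_000, 32_000, 60_000):
        std_gb = standard_transformer_bytes(tokens) / 1e9
        hyb_gb = hybrid_bytes(tokens) / 1e9
        print(f"{tokens:>6} tokens: standard {std_gb:5.2f} GB, hybrid {hyb_gb:5.2f} GB")
```

With these made-up numbers, the all-attention cache reaches about 7.9 GB at 60,000 tokens, which on its own would nearly fill an 8GB card before the weights are even loaded, while the hybrid stays just under 2 GB. Note that the hybrid still grows, only much more slowly, because its remaining full-attention layers keep caching every token.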

The other thing worth mentioning is that this isn’t a fresh install. I’ve been using this model for a while, so I know where it hits its limits and how to prompt it well (though I’m still picking up better local AI habits all the time). In other words, I didn’t go into this comparison blind; I already knew how to handle Qwen.

Where my local LLM held up, and where it didn’t

It went better than expected, but also worse than expected

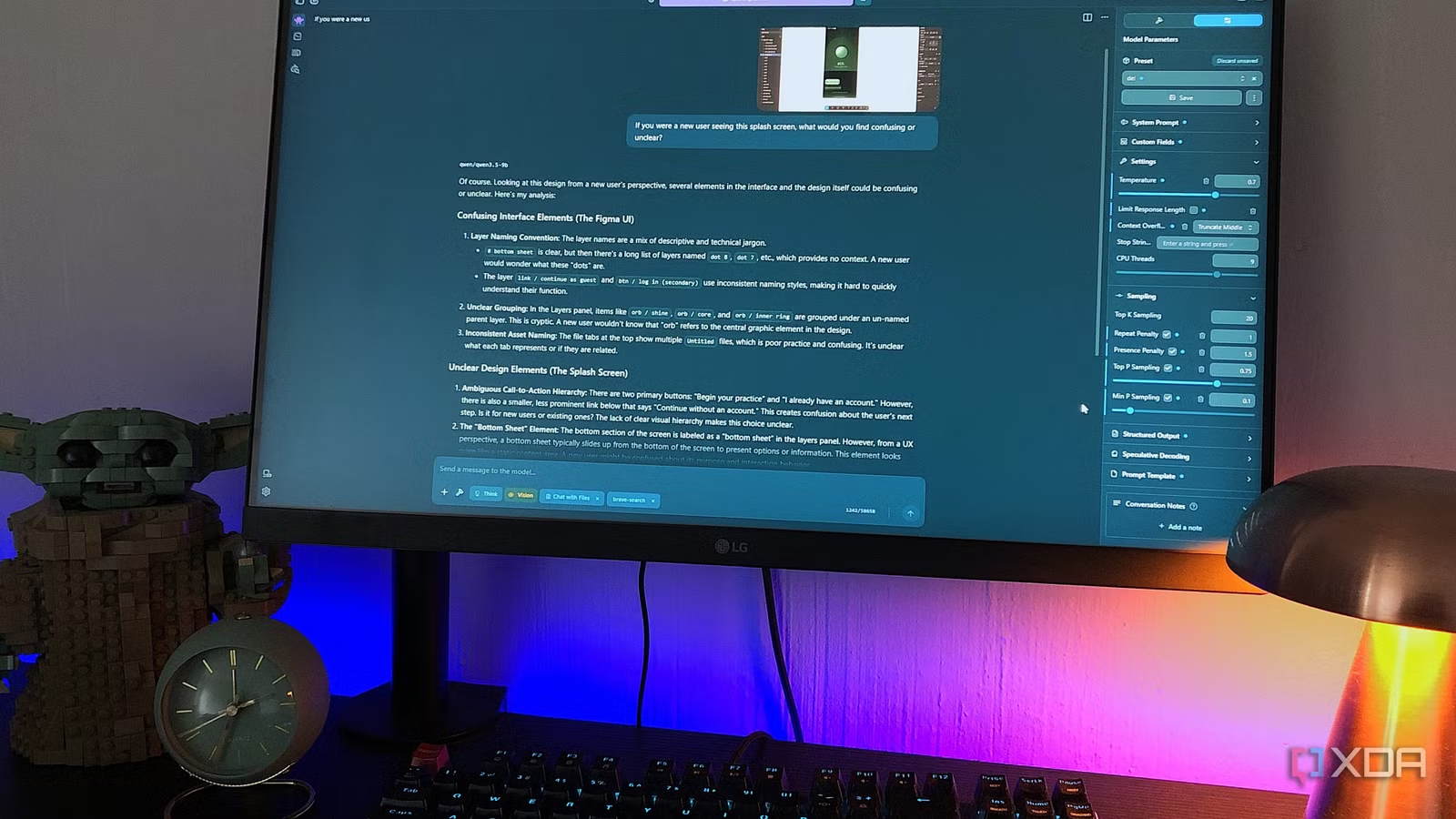

Image analysis was the first real surprise. I use it constantly in Claude: instead of writing a long, tedious prompt, I just show Claude what I’m working with by dropping a few screenshots into the chat. Qwen handles this well too. Really well. It can identify software from a screenshot of its interface with no extra context, describe scenes accurately, point out design inconsistencies, all of that.

Back-and-forth research and working with documents held up too. The biggest difference here is how you phrase your prompts. With Claude, I can ramble messily and it still knows what I mean. Local models don’t give you much room to be that sloppy, because they read your prompts more literally, so you need to build a prompting habit focused on clarity. Once you do, though, they can handle more than you’d think. I’m talking about everyday thinking-partner tasks, like working through a concept you’re stuck on or summarizing something long. Qwen understood every angle I threw at it and gave me the explanations and comparisons I needed. I still prefer Claude’s friendlier, more conversational style, but that’s a matter of taste, not a reflection of Qwen’s abilities.

And of course, freedom is where any local LLM pulls ahead. There’s no rolling usage window looking over your shoulder, no extra charges on your card, and no worry that your conversations might sit on someone else’s server for years. Your workflow simply feels less watched.

Then there was the rendering loop for visualization and prototyping.

Qwen can write the HTML, and I can open it in my browser; that isn’t the wall. The problem is that getting a rendered, interactive version inside the chat locally means either wrestling with Open WebUI, which doesn’t connect cleanly to LM Studio, or rebuilding my entire local setup around Ollama, Open WebUI’s preferred backend. And even when the setup goes smoothly, Open WebUI’s artifacts depend on the model emitting exactly the right format to trigger the rendering panel, and a general-purpose local model like Qwen 3.5 isn’t tuned for that the way Claude is.
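If all you need is the “open it in my browser” half of that loop, a small script can close it without Open WebUI. The sketch below is a hypothetical helper, not part of LM Studio or of my actual setup. It assumes LM Studio’s local server is running on its default port 1234, where it exposes an OpenAI-compatible `/v1/chat/completions` endpoint, and that `MODEL_ID` (a placeholder name) matches whatever identifier LM Studio shows for your loaded model. It asks for one self-contained HTML file, pulls the first `html` fenced block out of the reply, saves it, and opens it in the default browser.

```python
import json
import re
import tempfile
import urllib.request
import webbrowser
from pathlib import Path
from typing import Optional

# Assumption: LM Studio's local server is enabled on its default port.
LMSTUDIO_URL = "http://localhost:1234/v1/chat/completions"
# Hypothetical identifier: use whatever name LM Studio lists for your model.
MODEL_ID = "qwen3.5-9b"

FENCE = "`" * 3  # built at runtime so this snippet can itself live in a Markdown fence
HTML_BLOCK = re.compile(FENCE + r"html[^\n]*\n(.*?)" + FENCE, re.DOTALL | re.IGNORECASE)


def extract_html(reply: str) -> Optional[str]:
    """Return the body of the first html fenced block in a model reply, or None."""
    match = HTML_BLOCK.search(reply)
    return match.group(1).strip() if match else None


def ask_local_model(prompt: str) -> str:
    """Send one chat request to the local OpenAI-compatible server; return the reply text."""
    payload = {
        "model": MODEL_ID,
        "messages": [
            {"role": "system",
             "content": "Reply with one complete, self-contained HTML file "
                        "inside a single html code block."},
            {"role": "user", "content": prompt},
        ],
        "temperature": 0.2,
    }
    request = urllib.request.Request(
        LMSTUDIO_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=300) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


def render(prompt: str) -> Path:
    """Generate HTML locally, write it to a temp file, and open it in the browser."""
    html = extract_html(ask_local_model(prompt))
    if html is None:
        raise ValueError("model reply contained no html code block")
    out = Path(tempfile.gettempdir()) / "local_prototype.html"
    out.write_text(html, encoding="utf-8")
    webbrowser.open(out.as_uri())
    return out
```

With the server running, `render("a card with a button that toggles dark mode")` writes the file and opens a browser tab. It is still a manual, one-shot loop: there is no side panel and no in-chat iteration, and a reply that skips the fenced block just raises an error, which is exactly the gap in setup-free reliability described here.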

Claude gets it right every time. I drop in a screenshot or a brief, it builds something interactive in the artifacts panel without my having to ask, and I can iterate cleanly from there. Since I use Claude primarily as a design learning tool, that configuration-free reliability is what I’m paying for.

The subscription makes more sense now

The context window is the other shortcoming worth mentioning, though I saw it coming. On my hardware, I can’t push Qwen to even half of Claude’s context length, which meant my chats got cut short sooner than I’d hoped. It’s not a surprise, just a real limit worth being upfront about.

The rendering workflow is the one thing I couldn’t work around. Everything else came close enough that it’s harder to justify the subscription on those features alone. But that one design workflow, working reliably every time with zero setup, is what twenty dollars a month actually buys.

- Operating system: Windows, macOS
- Individual pricing: Free plan available; Pro plan at $17/month billed annually ($20/month billed monthly)
- Group rates: $100/month per person for the Max plan